A Dozen More Bytes

Reflections on Jeanette Winterson’s 12 Bytes: How Artificial Intelligence Will Change the Way We Live and Love

The following essay was prepared as a lecture presented at Jornada científica ‘Ética y democracia ante el despotismo algorítmico’, Universitat Jaume I de Castellón, Spain, 17 October 2025. My remarks think with and against the much-discussed book of twelve essays on artificial intelligence by the acclaimed English writer Jeanette Winterson. A wide range of controversial issues is discussed, including the meaning of intelligence, the dangers of anthropomorphism, Moravec’s paradox, state capitalism, digital inequality, and why it is that democratic accountability still really matters in the so-called age of AI.

Jeanette Winterson’s 12 Bytes (2021) is daringly fresh. Written well before the hullabaloo generated by San Francisco-based OpenAI’s launch of ChatGPT (in November 2022), it’s an uplifting provocation – a stylish book of essays by a self-confessed ‘storyteller by trade’ who says she wants to persuade readers that they’d better start thinking about AI and ‘what’s going on as humans advance, perhaps towards a transhuman - or even a post-human - future.’ My mixed reaction to her book sees things differently and takes things further. But it shares and praises Winterson’s fascination with the extraordinary techno-political moment through which our world is living. To hold readers’ attention, 12 Bytes uses syncopation (choppy sentences of irregular length and beat), throwaway lines, folk proverbs and a bit of swanky swearing (‘fuck the binary’, men as ‘dicks with a magic wand with special powers’, etc). Winterson’s storytelling aims to entertain. It wants to bridge the gap between her prose and her readers. The fun is didactic. It wants to instruct, to tell things as they really are. In literary terms, it quite often works, as earlier reviewers concur with handsome puffs of ‘hugely entertaining’, ‘beautifully idiosyncratic’ and ‘very funny’. But there’s a deeply serious concern running through the pages of 12 Bytes. While I am going to say that the book is much too apologetic of some of the many contradictory trends happening in the field of AI, and often blind or silent about other matters, the overall point of the book is compelling. Winterson is a feminist who stands against class arrogance and mansplaining and calls upon her human readers to get smarter. The book encourages readers to step back, to take nothing for granted, to think and act more critically and more wisely in a world that’s far stranger, more contradictory and less set in stone than they might previously have imagined.

1 A New Machine Age Revolution (Revolución de la era de las máquinas)

Winterson’s opening idea is correct. There’s a communications revolution sweeping our world. It’s an AI/robotics revolution whose transformative effects are as profound, say, as the first machine age revolution that erupted in Britain and France in the decades after the 1780s. That period saw the greatest transformation in human history since the invention of agriculture and metallurgy, writing, the city and the state. Not only did inventions such as the flying shuttle, spinning jenny, cotton gin, steam engine, railroad and light bulb reshape the daily lives of millions of people in the Atlantic region and beyond. Their language, ways of thinking and being-in-the-world were forced to make room for a whole new vocabulary featuring neologisms such as ‘factory’, ‘industry’, ‘industrialist’, ‘middle class’, ‘working class’, ‘wage slavery’, ‘capitalism’ and ‘socialism’, ‘railway’, ‘scientist’, ‘engineer’, ‘proletariat’, ‘economic crisis’, ‘strike’ and ‘pauperism’.

Winterson rightly insists that this new AI revolution is equally transformative. Smart machines are radically restructuring our lives in ways both unimaginable to our forebears and too often unnoticed or taken for granted by some contemporary observers. The unfinished AI/robotics revolution also gives us a new lexicon featuring terms such as bits and bytes, nanochips, smart phones, quantum computing, cookies, data harvesting, and brain-computer interfaces (BCIs). It arguably cuts much more deeply into our daily lives than did the spinning jenny, cotton gin, steam engine, railroad and light bulb. Importantly, Winterson reminds us that the new revolution has some roots in the first machine age revolution. She recalls figures such as Ada Lovelace (said to have written the first computer programme) and Mary Shelley, author of the famous novel Frankenstein, as well as Charles Babbage’s crank-handled calculating machines known as the Difference Engine and the Analytical Engine, the punch card technology patented by Joseph-Marie Jacquard and Leibniz’s adding and subtraction machine. We could also add quirky inventions such as George Moore’s gasoline-fired ‘Steam Man’ robot, exhibited widely in the United States in the early 1890s. But Winterson also rightly emphasises the novelties brought by what’s been called ‘the Internet of Things’. Wireless connectivity is indeed bringing intelligence to our thermostats, kitchen appliances, security systems, washing machines and other household items. A new generation of flexible cyborgs, sensuous and in some ways human-like, is arriving. AI robots, tutored and proficient, are everywhere. They’re often invisible; Rupert Murdoch’s News Corp Australia publishes 3,000 articles a week using generative AI. And the instruments are becoming so small and almost weightless that Berkeley’s Kristofer Pister and others long ago called them ‘smart dust’.

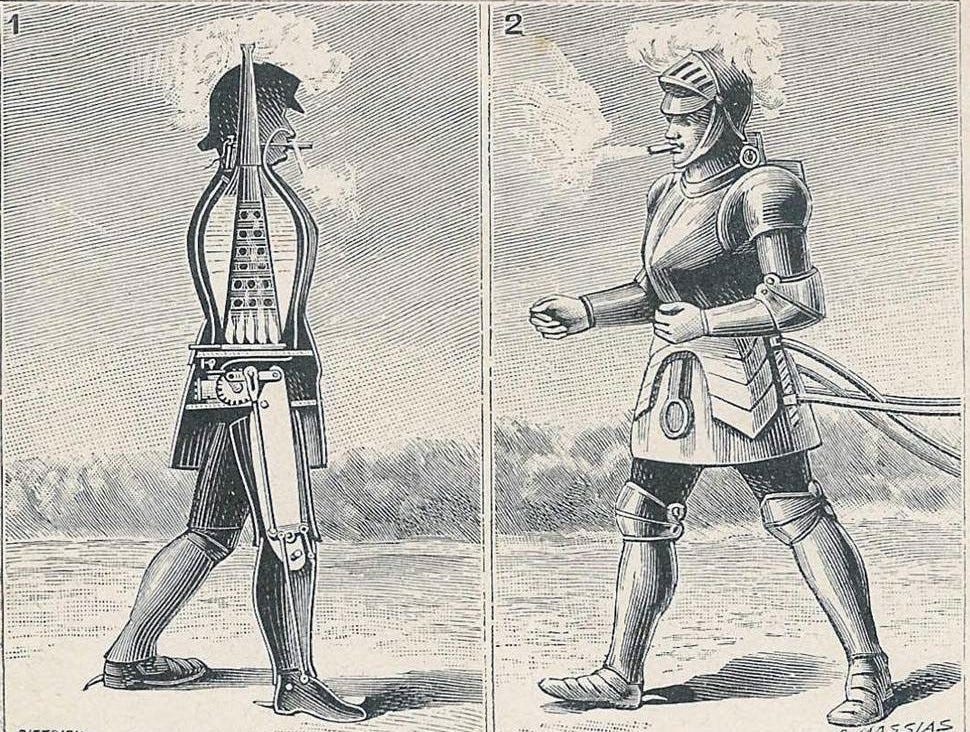

George Moore’s gasoline-fired ‘Steam Man’ robot (1893)

Some of these AI robots operate huge machines with large footprints. Since 2013, the International Space Station has featured Kirobo, Japan’s first robot astronaut. Hands Free Hectare has successfully planted and grown the first arable crop without the intervention of human agronomists or tractor operators. When we fly in a commercial aeroplane, according to a Cranfield University report, only a few minutes of our journey are nowadays controlled manually by pilots. On the Tokyo Stock Exchange, nearly 80% of stocks and shares are traded by algobots. In the People’s Republic of China, Tencent’s WeChat, the world’s most popular standalone mobile messaging app, enables 1.385 billion monthly users to post moments, voice and text messages, to follow other people, browse online shops, and purchase games, products and services in a cashless economy. AI robots now make their presence felt as surgical preceptors, in operating theatres, helping human patients who’ve opted for minimally invasive procedures with less painful micro-incisions. AI robots have hitchhiked across Canada and thumbed their way through the Netherlands and Germany. They have been arrested for buying Ecstasy, Diesel jeans and a Hungarian passport online. Sophia was the first to be granted citizenship (in Saudi Arabia). A while ago, a high-end Californian company called Nvidia caused a stir by generating for the first time photo-realistic (‘deep fake’) images of humans who never existed, so forcing users to take one more step towards a world where ‘objective truth’ becomes a matter for deep debate. In this weird new world, AI robots are even reshaping our sense of comfort and emotional love. If you visit Nagasaki, you can check in to the world’s first robot-staffed hotel, greeted by impeccably dressed, multi-lingual, smiling and blinking, female ‘actroid’ robots, backed by humans employed to handle security and cleaning ‘behind the scenes’. 
A Japanese company long ago offered Gatebox, a voice-powered 3D female companion who can talk, send text messages, wake you gently in the mornings, dutifully provide weather forecasts, alter your home lighting and order the washing machine to do its job. As Winterson reminds us, ‘digisexuality’ and the AI pornification of women are also products of this unfinished revolution. Sex dolls with patented animatronic talking heads with programmable personalities – Harmony is a market leader in this male fantasy world – are capable of ‘ro-gasms’, she writes.

2 Human nature? Humanity? (Naturaleza humana)

Although Winterson sometimes speaks of AI tools as ‘neutral’, or says things like ‘there’s no need to be afraid of the technology – it’s how we use it that matters’, 12 Bytes illustrates by default that something much bigger and more complex is going on. Conventional notions of ‘human nature’ and ‘humanity’ are under siege. Smart machines, programmed through algorithms and tapping into huge data bases, aren’t to be understood as mere ‘add on’ technical extensions of our human capacities. We could say that smart machine systems are a new medium of communication that’s reshaping how we so-named humans perceive, understand, negotiate and move within the world around us. The Canadian scholar Marshall McLuhan, if he were still in our midst, would warn that we humans have a poor understanding of the elementary principle that the printing press, radio, television and other previous communication media weren’t neutral tools. They formed and shaped their users. The ‘medium is the message’, he famously said. He’d nowadays probably emphasise that the unfinished AI/robotics revolution is radically redefining what it means to be ‘human’. In the future, as the revolution deepens, he might say, intelligent machines are bound to continue adding and subtracting, amputating and extending, chiselling and hewing our bodies, ways of thinking, our embodied sense of space-time.

Marshall McLuhan (1973)

Consider for a moment the colonisation of our lives by mobile phones. We no longer thumb through phone books in phone boxes, memorise the numbers of family and friends, buy newspapers, listen to the wireless, sample vinyl music on turntables, hump around ghetto blasters, lose our way in city streets, or carry Kodak Brownie cameras. Smart tools are transforming our everyday lives. AI robots are having McLuhan effects. The hand of space-time connectivity has been strengthened. So profound are the upheavals in the way we experience space-time settings and live and view ourselves that it becomes clear that the spirit and substance of what we call ‘the human’ isn’t a fixed and stable entity. But Winterson’s fine book reminds us that there’s a flipside to this point: the unfinished revolution is making clear, and we should see, that generic talk of ‘AI’ is misleading, and that there are no such things as ‘AI robots’, pure and simple. It’s true that AI robots can be defined in the abstract as artificially intelligent machines equipped with software designed to carry out specific pre-programmed tasks and to draw upon large data banks to learn for themselves how to reach conclusions. But one lesson provided by Winterson’s book is the need to think of ‘AI’ using more complex taxonomies. Taxonomies, as the French sociologist Pierre Bourdieu reminded us, are political in their effects. The most powerful big-tech corporations, such as Nvidia, Microsoft and Apple, have a material interest in portraying AI as a homogenous, catch-all category. Their efforts to monopolise classifications should be refused. We must stop speaking of ‘AI’ and ‘robots’. We should avoid the mistake (evident in Nicholas Carr’s The Glass Cage: Automation and Us [2014]) of lumping together different intelligent machines, and their effects on humans. 
Automated thermostats in our homes aren’t the same as war games such as Paik in Battlefield 2042 or the turquoise-pigtailed music superstar Hatsune Miku, the three-dimensional hologram voicebank who’s opened for Lady Gaga and who performs live before sell-out audiences who call themselves Mikuheads; or ‘Tilly Norwood’, the Gen Z influencer who posts on Instagram courtesy of AI startup Particle6; or Aitana López, a virtual influencer created by the Barcelona company Clueless, a pink-haired 25-year-old who reportedly earns up to 10,000 euros per month posting selfies from concerts and her bedroom. Automated cars render earthling drivers superfluous; biometric technologies augment the human ability to recognise faces; Alibaba’s City Brain is a cloud-based system designed to reduce city traffic jams; song identification apps like Shazam, AI music generators such as Spotify’s Creator Technology, and computer-inspired images in the arts enable us to do things we couldn’t have done before. Amidst this new carnival of complexity, Winterson reminds us that one thing’s for certain: this AI/robotics revolution, crammed with instances of automation, innovation, augmentation and control, whether we like it or not, is forcing us to re-consider what it means to be human.

Aitana López

3 Anthropomorphism and alienation (Antropomorfismo y alienación)

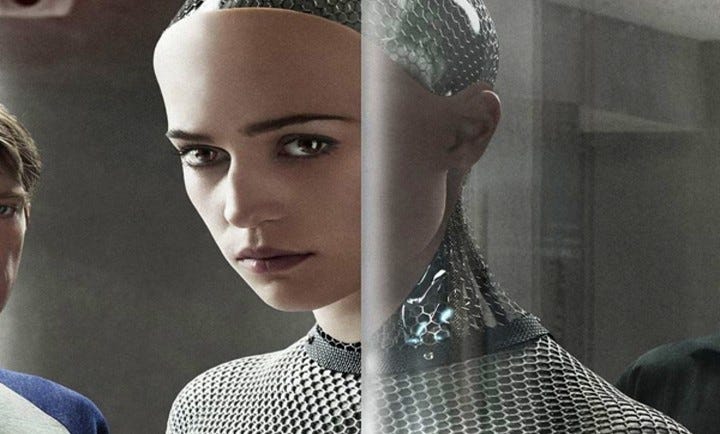

Read in one way, Winterson’s 12 Bytes is a swingeing critique of our propensity for collective narcissism. We human animals have a bad habit of hypnotising ourselves then blindly projecting ourselves onto objects around us. We give names to extreme weather events, dress animals in our clothing; some men confuse their own genitals with the fancy cars they drive. We have a collective talent for anthropomorphism, the unfortunate habit of losing ourselves within our own creations. Often unthinkingly, we then praise or blame those same objects for being the source of our bliss and liberation, or our misfortune and misery. This is an old human custom and habit. The ancient Romans and Greeks represented their deities through human forms and qualities, such that gods and goddesses rode horses and chariots, fell in love, married and had children, feasted on exotic foods, wielded weapons and fought furious battles. We modern humans have long indulged the same habit. In creations from Faust and Frankenstein to the apocalyptic The Terminator series and quite recent films such as Spike Jonze’s Her (2013), Alex Garland’s Ex Machina (2014), which features a humanoid robot named Ava, and Blade Runner 2049 (2017), we display a remarkable penchant for representing our hopes, dreams, anxieties and nightmares through objects of our own making. A generation ago, I recall, some people feared that digital televisions were the portals through which evil weevils entered our homes. More recently, people have worried that our children’s cuddly toys are being used to record and broadcast private conversations; or that the fridge freezer connected to our smartphones will be hacked by the NSA or Chinese government spies; or they have predicted that as AI takes over the world ‘probably none of us will have a job’ and that ‘AI and the robots will provide any goods and services that you want’ (Elon Musk at the VivaTech 2024 conference in Paris).

Ex Machina (2014) science fiction psycho-thriller film directed by Alex Garland

When it comes to artificially intelligent machines, latter-day anthropomorphists seem to know no limits. They routinely speak of AI as if it were a person and blame ‘AI’ for everything under the sun. They consult chat bots as if they were oracles equipped with bottomless stores of knowledge and wisdom. Anthropomorphists also ask whether the price of machines that think is humans who don’t. They fear the ‘erosion of skills, a dulling of perceptions, and a slowing of reactions’ (Nicholas Carr). They accuse AI robots of causing job losses in the labour market, shrinking the middle class and swelling the ranks of the poorly paid and unemployed. They complain that intelligent machines are incapable of love or affection. Or distinguished writers like Ian McEwan - think of his Machines Like Me (2019) - pen novels about the sensual mayhem AI triggers when robots invite us to form love triangles with humans. Anthropomorphists say AI robots are turning humans into powerless infants. They fear humans are drowning in the banality of a wireless world. To which Winterson helps us reply: the bad habit of anthropomorphising things is people’s way of disguising their own anxieties and fears by projecting them onto ‘AI’. In fudging the aetiology of their hopes, laments, anxieties and fears, they display the symptoms of what a 19th-century hirsute human named Karl Marx called self-estrangement, or self-alienation (Selbstentfremdung).

4 Human Arrogance (Arrogancia humana)

Winterson’s 12 Bytes calls into question the corollary of human anthropomorphism: the presumption that homo sapiens is the cleverest worldly creation and rightful master of planet Earth. Humans are such strange creatures. Their arrogant will-to-power is the flipside of their propensity for self-estrangement. They lose themselves hypnotically in the machines of their own making then they suddenly recoil and rejoice in the opposite recognition that after all they are the designers and builders of AI robots, so proving their own evolutionary superiority. Hence the strange flip-flops when delivering verdicts on whether AI robots are on balance good or bad for the human species.

Please let me illustrate. Some commentators are incurable hyper-optimists. Supposing that in the coming decades all will be the best in the best of all worlds, they fancy ‘post-humanity’ as the triumphant destiny of homo sapiens. They fantasise the time is nigh, within this century or next, when smart humans will morph into super-smart cyborgs. ‘In the metaverse you’ll be able to do almost everything you can imagine’, boasted lizard boy wonder, Big Brother Zark Muckerberg. Winterson hedges her bets, but she’s also fascinated by the possibility that the metaverse, ‘a place to be open-minded and see what [being] human feels like when it doesn’t feel confined to a single physical self in a time-bubble’, is ‘the next step on our evolutionary journey’. Along the same lines, the renowned astronomer Martin Rees predicts interplanetary and interstellar space will be the domain in which super-intelligent robots flourish. Developing capacities ‘as far beyond our imaginings as string theory is for a monkey’, AI robots could represent a human victory in humans’ struggle to comprehend and colonise the universe. ‘Our earth’, the good astronomer says, ‘though a tiny speck in the cosmos, could be the unique “seed” from which intelligence spreads through the galaxy.’ Similar conjectures are made by Jerusalem-based pop star Yuval Noah Harari, who predicts that the amalgamation of humans and machines will be the ‘biggest evolution in biology’ since the emergence of life four billion years ago. Driven by dissatisfaction with the way things are and desirous of an ‘upgrade’, at least some humans would evolve, take a giant leap towards ‘a divine being, either through biological manipulation or genetic engineering or by the creation of cyborgs, part organic part non-organic’.

Then there are the futilitarians who fear the loss of our precious humanity. They recognise just how astonishing are the ingenuity and wide-ranging boldness of the innovations taking place, but they rage against our intelligent machines. They fear a future in which humanity is gobbled up by intelligent design. Winterson doesn’t say this, but it needs to be said: human pessimists and human optimists live in the same discursive world. They are common believers in human superiority. Rarely do the miserable and the cheerful define what they mean by ‘human’ but, when they do, platitudes and contradictory but complementary definitions are commonplace. Consider the first-ever human use of the word ‘robot’, credited to the Czech writer Karel Čapek (1890–1938). His R.U.R. Rossumovi Univerzální Roboti (Rossum’s Universal Robots [1920]) portrays a world overrun by blue-uniformed, intelligent robots who once had seemed happy to work for human profiteers. The ‘bad human’ robots then change their minds. Crushing their despised human predators, they establish a Government of the Robots of the World. But then the strangest thing happens: for the first time, the robots notice the beauty of nature. The contradiction between misery and joy is resolved. The robots learn to laugh, and to love. They come to value the dignity of work. They grasp the error of their ways. They learn how to learn. They become ‘good’ humans.

Strikingly similar acrobatic displays of human arrogance and efforts to juggle opposites are evident in the public alarm triggered in recent years by the fast-paced entrance of AI/robotics technologies into the mainstream of the unfinished digital communications revolution. Critics of these technologies regularly note how the rapidly multiplying AI-powered tools such as ChatGPT4 and Bard, deep fake imagery, false telephone call technologies, and text-to-video techniques are corporation-driven and still mainly unregulated by governments. They rightly observe that these remarkable technologies draw upon large data sets extracted by corporations and governments from digital users without their consent or concern for their privacy. Anxiety about the spreading use of these and other big data, large language tools, and the fearful talk of ‘powerful digital minds that no one – not even their creators – can understand, predict, or reliably control’, are accompanied by warnings about their socially and politically damaging consequences. ‘Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization?’ ask the signatories of an open letter (March 2023) calling (disingenuously) for a pause to ‘giant AI experiments’. In the absence of local, regional and global regulations and safeguards, other observers infer, many AI tools can be misused by businesses and governments to disrupt, eclipse and destroy the existing ecology of open and trusted media platforms and freedom of speech so vital for democratic politics.

5 Questions of Super Intelligence (Superinteligencia)

Winterson is fascinated by the way programmers, designers and corporate executives are busily trying to deploy machines that they say have not just the ability for pre-programmed intelligence but also the capacity for deep learning and super intelligence. In a way, there’s nothing new here. Long ago, there were predictions that there would be machines cognitively cleverer than ourselves. And the prediction has come true: the artificial neurons of some AI robots already operate millions of times faster than our human brains. Things will accelerate when quantum AI comes on the scene. Whatever happens in future, we’ve reached the point where there are superior computational architectures and learning algorithms that can easily be copied, almost instantly, without machines having to learn from their predecessors all over again. Much more remarkable is that machines are now capable of deep learning, the practical application of sets of algorithms known as neural networks. Modelled loosely after our brains, deep learning machines perform much more than task-specific operations. Unsupervised by humans, these machines get on with the job of spotting patterns within vast quantities of otherwise unclassified raw data. They learn to classify very much faster and often much more inventively than human users, and that’s why they are so useful in such human fields as speech recognition technology, social network filtering, diagnostic medicine, chess and other boardgame programmes.

I.J. ‘Jack’ Good (1916 - 2009)

This remarkable capacity to learn shouldn’t come as a surprise, Winterson reminds us. The inference that ever smarter, self-taught machines would one day exceed human capacities was drawn more than half a century ago by I.J. ‘Jack’ Good. The British mathematician and cryptologist, who worked with Alan Turing at Bletchley Park, spoke of the ‘intelligence explosion’ that would be produced by smart machines capable of designing even smarter machines. Human intelligence would then be ‘left far behind’ by the ‘first ultra-intelligent machine’. By virtue of its superior ingenuity, it would serve as ‘the last invention that man need ever make’, he said. There are experts who take their cue from Good and jump for joy at the growth of artificial intelligence. They wax eloquent about the explosion of computing power produced by advanced self-modifying machines that learn iteratively how to improve their reasoning capacity in ever-faster cycles. While there are some experts who joke that the breakthrough to artificial super intelligence has been 15 years away for the past half-century, the truth is that amazing things are happening within the AI robotics field. Once, millions of people talked about the IBM computer that toppled the grand master of chess, Garry Kasparov, at his own game in 1997. Many observers were impressed by the clever algorithms that won the 2011 US quiz show Jeopardy! Four years later, AlphaGo, which combined tree search algorithms with machine learning, became the first robot to beat a human professional Go player, on a full-sized board, without handicaps.

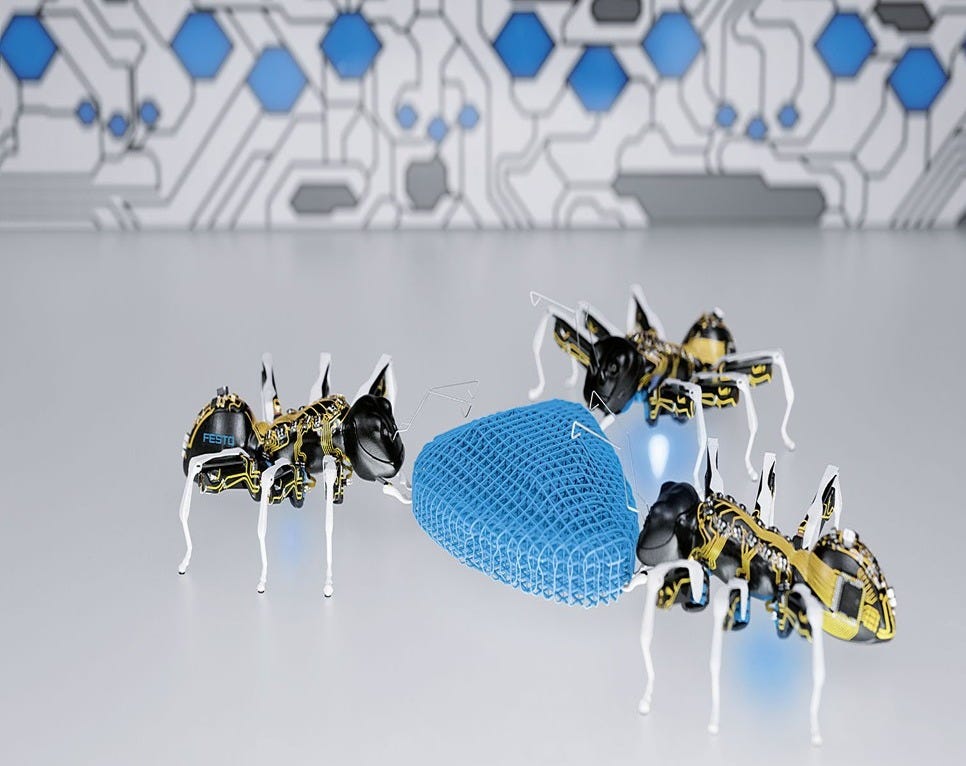

These breakthroughs in robotic intelligence proved to be just the beginning. Consider for a moment the field of collaborative robots, or ‘cobots’. For the world’s factories of the near future, the German company Festo has designed ‘bionicANTs’, tiny 13.7 centimetre-long cyberinsects capable of ferrying items and working autonomously and co-operatively with fellow factory ants. The Swiss multinational company ABB has developed ‘YuMi’ - short for you and me working together - a dual-arm, sentient, vision-guided assembly robot that is so dextrous it can do everything from threading a needle to handling the components found in tablets and mobile phones. And there’s the ‘gentleman cyborg’, a charming domestic cobot that waits hand and foot on humans round the clock, as it demonstrated in its 2015 world premiere at the Hannover Messe. Even more intelligent cobots and AI deep learning machines are surely on their way and are bound to reshape the way we live.

6 Moravec’s Paradox (La paradoja de Moravec)

Winterson’s 12 Bytes is disappointingly silent about the practical barriers to the perfection of what today is loosely called artificial intelligence. Let’s think for a moment of Pepper, the iconic ‘emotional robot’ that sold out a few years ago within a minute of going on sale to the general public in Japan. Created by Aldebaran Robotics and Japanese mobile giant SoftBank, Pepper was touted as ‘the first humanoid robot designed to live with humans’. According to news releases, Pepper, which stood just over a metre tall and moved on wheels, could pick up on human emotions and create its own by using an ‘endocrine-type multi-layer neural network’ displayed on a tablet-sized screen on its chest. Pepper’s touch sensors and cameras were said to influence its mood. It could express ‘joy, surprise, anger, doubt and sadness’ and would audibly issue a sigh when unhappy. Yet its manufacturers didn’t say whether Pepper had the capacity for irony or nostalgia or wishful thinking, or whether it could shake with excitement, palter, feel fear or simply draw back and deliver a swingeing ‘No!’ to humans. In reality, Pepper had no such capacities, and it was withdrawn from the market in 2020.

Here the key issue is whether science fiction fantasies of super intelligent artificial machines can ever come true. Let’s set aside the tricky matter of whether or how super intelligent machines could robotically source and assemble the physical materials from which they are manufactured. The broader truth is that AI robots are still actually quite dumb. The Russian digital czar Dmitry Itskov, the founder of New Media Stars, is reportedly planning to make a digital copy of the human brain by 2045, but the fact is that for the foreseeable future AI robots, as the famous maker of robots Hiroshi Ishiguro has observed, will continue to have ‘insect intelligence’.

There are many reasons for this. Tacit knowing and tacit knowledge, the deep reservoirs of savoir-pouvoir-faire that humans regularly draw upon when we go about our daily lives, don’t come easily to code writers. A decade ago, Nick Bostrom’s Superintelligence: Paths, Dangers, Strategies (2014) and Stuart Armstrong’s Smarter Than Us: The Rise of Machine Intelligence (2014) pointed out that artificially intelligent robots capable of understanding and serving humans are well beyond our reach, and will most probably remain so indefinitely. Yes, smart machines can now process information much more efficiently and rapidly than human brains. So far, however, whatever the corporate hype, programmers have been unable to think up algorithms capable of capturing and expressing the deep reservoirs of common sense that all humans routinely draw upon in everyday life.

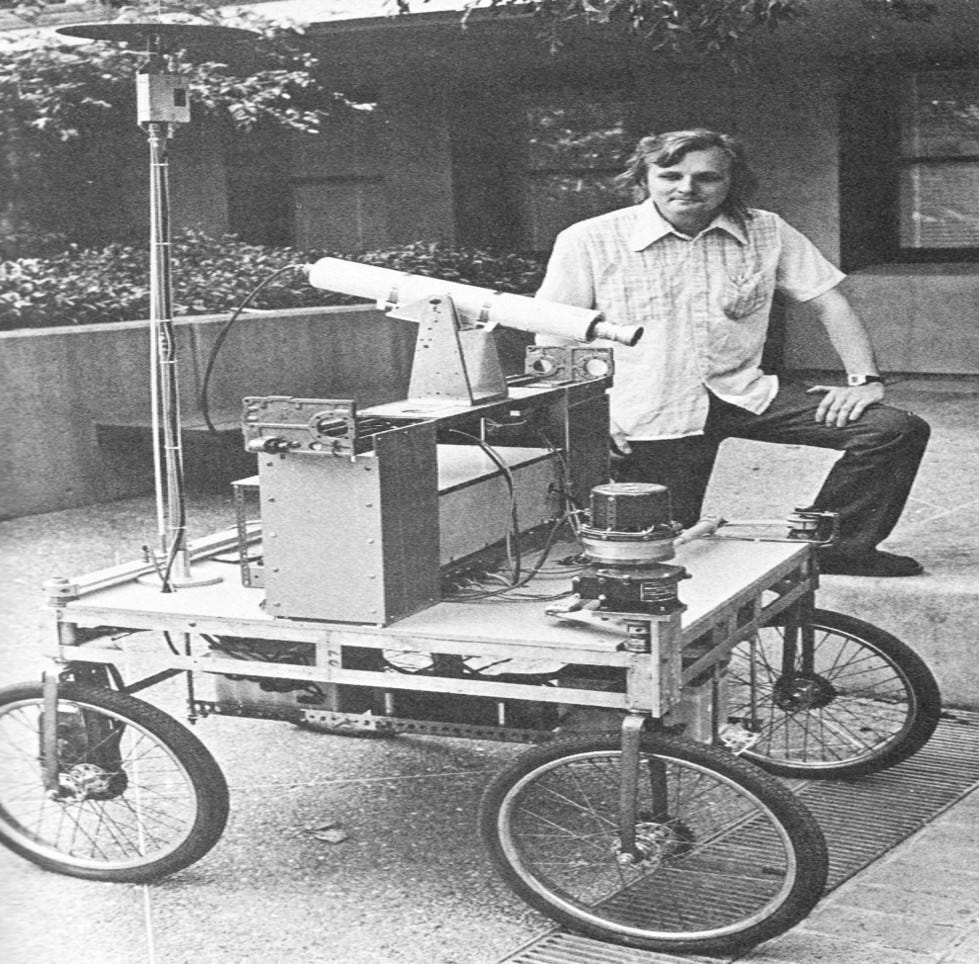

Hans Moravec, working with an early mobile robot, the Stanford Cart (c 1977)

Winterson doesn’t mention a basic paradox named after Austrian-born Canadian roboticist Hans Moravec, who has pointed out that AI robots are skilled when performing abstract, high-level computations but clumsy and brain-dead incompetent when it comes to handling everyday interaction with humans. ‘It is comparatively easy to make computers exhibit adult level performance on intelligence tests or playing checkers, and difficult or impossible to give them the skills of a one-year-old when it comes to perception and mobility’, he wrote four decades ago. The point could be put differently. AI engineers, 80% of whom are men, Winterson tells us, have so far failed to build machines that pass what’s called the Turing Test: programmed machines evidently cannot replicate human-quality interpersonal skills. Getting things right in the ‘uncanny valley’ that lies between machines and humans isn’t easy. What Google calls ‘ambient computing’ produces disconnects. The Meta debacle with Galactica, designed to assist scientists but withdrawn after 3 days of intense public criticism, is something of an allegory of the Turing Test failure: it illustrated the hubris and limitations of large language models (LLMs), so confirming a huge body of research which underscores the limitations of AI, including its tendency to reproduce banalities, orthodox prejudices and outright falsehoods.

There’s additionally the problem that algorithms capable of dealing with norms, means-ends calculations and Solomonic judgements have still not been designed. How can human coders equip machines with the ability to decide how best to decide when faced with two, or three, or more conflicting courses of action? Should a driverless vehicle risk a pile-up by slamming on its brakes to avoid a pedestrian or (as the driverless shuttle operated by the French company Keolis discovered during its first day of operation in Las Vegas) a negligent human driver? What if a driverless taxi collides with a fire truck or causes traffic jams or drives off piste and ends up stuck in wet cement (as happened during their first weeks of operation in San Francisco)? What if a do-no-harm ethic results in evil effects? Are intelligent robots capable of handling aporia, dilemmas, serendipity, cul-de-sacs, unintended consequences, counterfactuals and unexpected good fortune? No. That’s why machine learning experts indulge in euphemism. They speak of ‘bottlenecks’ and ‘barriers’ and warn of the need for sets of algorithms known as ‘ethical governors’. In effect, these experts confess that human rules that pre-decide how AI robots should behave in particular space-time settings are necessary and unavoidable, and that they should be mandatory.

For the sake of profit, power and fame, other champions of AI simply ignore Moravec’s paradox. What they do not tell us is that artificially intelligent tools of communication are plagiarism and prediction machines: they are closed systems structured by fixed sets of probability rules that aim to mimic and borrow from and predict existing human behaviour and its biases. For good reasons, the computational linguist Emily Bender has recently called for replacing the acronym AI with the word automation. Her important thesis is that the ‘deep fake’ image generation tools (such as X’s Grok-2) and chatbots created by OpenAI and rivals such as Elon Musk’s xAI, Anthropic, Meta and Google and the Chinese company DeepSeek are no more than ‘stochastic parrots’. These tools ingest huge volumes of data and base their responses to our questions on the statistical probability of certain words or images occurring alongside others. Bender has compared large language models (LLMs) to a hyper-intelligent octopus eavesdropping on human conversations: they are capable of spotting statistical patterns but simply incapable of understanding intent or meaning, or of grasping anything beyond what they have observed and heard. Code writers indeed produce systems for ‘haphazardly stitching together sequences of linguistic forms it has observed in its vast training data, according to probabilistic information about how they combine, but without any reference to meaning.’ That is why OpenAI boss Sam Altman’s recent boast that AI will soon ‘discover new knowledge’ and Anthropic’s Dario Amodei’s claim that next year there will be AI tools that are ‘smarter than a Nobel Prize winner across most fields’ are plain nonsense. They somehow suppose there’s a metaphysical mind behind the software and its texts when, in reality, it’s the human coders perfecting the arts of parroting who are trying to persuade us that they know us better than we know ourselves, and that cannot ever be.

Emily Bender (1973 -)

Moravec’s paradox highlights a political problem. AI code writers claim to be perfecting the arts of ‘alignment’ of humans and super-intelligent robots, but the new technologies are inevitably based upon the less-than-smart but manipulative mirroring of humans’ likes and dislikes, cognitive abilities and felt emotions. In the wrong hands, measured in power terms, these tools have despotic qualities: they are designed to induce a strange new form of voluntary servitude among users. Corporate coders seek to persuade us of these tools’ ‘human’ and ‘superhuman’ qualities even though, in practice, the tools fall well short of recognisably human standards such as thoughtful intentionality, which no code writer is capable of replicating. AI robots can’t generate or fully understand sarcasm or irony, wilfully peddle lies, take leaps of imagination or make ethical judgments. It turns out that the new artificial intelligence tools are unintelligent artifices. They are too clever by half. They proficiently fabricate texts, sounds and images. They do big data calculations at lightning speed. They can pen poetry, craft scholarly texts, write computer code and respond to users’ requests for information. But they can’t think. Their digital minds are brainless. Therein lies their profound danger: they are the latter-day equivalents of the sorcerer’s broom described in Johann Wolfgang von Goethe’s famous poem Der Zauberlehrling (1797). They are broods of hell, originally human spirits who come to ride roughshod over human commands. As machine learning algorithms are spread by companies and governments through social and political life, shaping access to everything from news and entertainment to credit, insurance, jobs and housing, so the illusion of thinking, smart, ‘super intelligent’ machines gains ground. They are praised for making life smarter, and easier. But as they colonise our lives, genuine thinking and judgment making by flesh and blood humans are eliminated.

7 Intelligence multiplied (Inteligencia)

A major weakness of Winterson’s 12 Bytes is its failure to define the keyword intelligence and to recognise that there are many different and heavily contested meanings and forms of ‘intelligence’. As a self-confessed ‘enthusiast for AI’, Winterson makes the case for replacing the phrase AI with ‘alternative intelligence’. It’s unconvincing.

For decades, research by Howard Gardner and other human psychologists and educators has highlighted the way in which humans’ inherited and learned capacity to think is not singular but differentiated. We humans are creatures of ‘multiple intelligences’. There are humans who have a way with words. They learn the art of intelligent oratory, or easily understand and speak other languages, or master the arts of thoughtful reading. They are gifted linguists. Other humans display an unusually thoughtful feel for the biomes in which they dwell. Ecological intelligence is their thing. They skilfully recognise different flora and fauna and intuitively understand the complexity and fragility of living and non-living environments. They think of themselves as deeply attached to their planetary surrounds, and express indignation and voice intelligent criticisms of the destruction of lands, forests, waters. Some individuals display a special knack of thinking abstractly and competently handling numbers and formal logic. There are dancers, athletes, builders, actors and other people whose kinaesthetic acuity is unusually sharp. Their bodily grace, self-disciplined agility and learned sense of timing and perfection are remarkable. Not to be overlooked are those musically talented individuals endowed with a quickness of mind, a sharp grasp of tempo and tone, the ability to spot and re-create absolute pitch, an intelligence for sound, rhythm, structure and audience reception. And, as the philosopher Martha Nussbaum reminds us, we should not underestimate the capacity of humans for developing the intelligence of emotions. Think for a few seconds about the case of women in conversation and thinking through why they’re angry about their maltreatment by men in their workplace. 
Their reasoning is situated, embedded both in language and in deep background bodily emotions, such as their feelings of self-respect and judgments that their own flourishing is vulnerable, and that they are being robbed of their well-being. Their anger at indignity reminds us that intelligence can’t be synonymous with the work of male coders sitting in offices or superior-minded professors who linger in libraries and proclaim universal truths from ivory towers distant from everyday life.

8 Hallucinations, or Robot’s Cramp (Alucinaciones)

Some experts rightly warn of possible catastrophes caused by people’s inability to control or communicate with super intelligent machines in the hands of a few. Think of the ominous portents: the first-ever (in 1979) American worker to be killed when a Ford assembly line robot arm slammed into him; or (in 1981) the Japanese engineer at a Kawasaki factory who was pushed into a grinding machine by a broken robot arm he was attempting to repair; or the young human worker crushed to death by a robotic arm in a Volkswagen plant in Kassel, north of Frankfurt. Now consider the intensified use (since 2024) by the Israeli occupation army of quadcopter drones as tools of aerial surveillance, psychological intimidation, the destruction of buildings and the murder of civilians seeking refuge after being forcibly displaced. Many incidents have already been documented in which crop-spraying Agras quadcopters produced by the Shenzhen-based Chinese company DJI have been modified and used to broadcast eerily distressing sounds designed to spread fear and panic among Palestinian civilians. Redesigned quadcopters have entered crowded homes at night, hovered within rooms, filmed sleeping families, and then exited through windows, leaving whole families terrified in displacement zones turned into death traps. It’s easy to imagine forthcoming wars in which AI robots are the principal combatants doing the dirty work of wiping out whole armies, on both sides, and whole cities and their citizens as well. At least 55 governments are currently developing killer robots for use under battlefield conditions. Ukraine’s Victoria Shi is the first AI spokesperson used by a foreign ministry under wartime conditions. The Chinese government has already stationed Sharp Claws killer robots on its high-altitude border with India. 
The distinction of launching the first AI war goes to Israel in its 2021 assaults on Gaza; more recently, it has used booby-trapped pagers to kill Hezbollah supporters and the Habsora (‘The Gospel’) AI system to generate intelligence data for the purposes of choosing specific targets for attack and predicting the likely number of civilian casualties in Gaza.

Countries like the US, China, South Korea, Russia, Australia, India and the UK are meanwhile investing heavily in drones and other advanced AI weapons that have the ability to choose and attack their own targets – without human control. All this even though AI robots can’t make complex ethical choices, don’t come equipped with compassion and understanding and, thanks to their coders and designers, make decisions that are incurably biased and flawed. Just as text predictors regularly get things wrong and emerging facial and vocal recognition tools often fail to recognise women, people of colour and persons with disabilities, so autonomous weapons are incapable of replicating human-style, context-sensitive judgments. What’s equally worrying is not only that the replacement of troops with machines makes decisions to go to war easier. It is also that, through battlefield capture and illegal arms trading, these weapons are bound to fall into the wrong hands. The alarm of AI researchers who are currently petitioning against the further development of autonomous weapons is understandable, and laudable. Yes, there are occasions when autonomous weapons can serve socially useful purposes, as Dallas police officers proved (in 2016) by deploying a bomb-equipped robot to kill a sniper. Yet autonomous weapons have the potential to become the Kalashnikovs and car bombs of the future. Capable of selecting and engaging targets without human intervention, AI robots require no costly or hard-to-obtain raw materials. They’re potentially cheap to mass produce. That’s why they’re ideal weapons for money- and power-hungry warlords and thugs as well as despots wishing better to control their subjects. Winterson is strangely silent about the global arms race in dangerous battlefield robots whose use is pushing us into a dystopic world where robots lose their minds, hallucinate, go off their heads, suffer a form of epilepsy that Karel Čapek in R.U.R. called ‘robot’s cramp’.

9 Capitalism (Capitalismo)

The campaigns by Amnesty International against AI surveillance, lawsuits against data theft, petitions by leading AI human researchers and moves by United Nations officials to ban killer robots should remind us that power is an indispensable category for making sense of the unfinished AI robotics revolution. Brazenly ignoring the limits of machine learning, there are governments and corporations actively designing robots for the purpose of manipulating, controlling or eliminating human beings. Winterson notes a worrying trend: the pre-programmed subservience and mechanised pornography of sex robots – 95% of the industry is geared to men – that spread masculinist stereotypes, promote the commodification of women’s bodies and increase levels of male violence against women. Facial recognition technology can feed surveillance states, as in China, where alleged criminals are spotted and apprehended at public events, train stations and (in Hangzhou) fast-food outlets. These are sinister trends. They should pose challenges to technology assessment experts, who typically shy away from political questions, as the critic Evgeny Morozov has wisely pointed out. More comfortable in the confined worlds of literature, neuroscience and pop psychology, technology assessors typically leave little or no room in their analyses for corporations and states, parliaments and judiciaries, political parties, lobbyists, social movements and civic struggles. Everything is reduced to technophilia, technophobia or something in between. It’s as if - misleadingly - robotics and artificial intelligence operate in power-free zones, untainted by human struggles over who gets how much, when and how. So the obvious should be re-stated: the public matter of who decides who manages to win control over people and the institutional systems of power in which we live, work and play, is emerging as among the greatest political questions in these early decades of the 21st century.

The matter of power and its maldistribution is central to understanding the connection between the unfinished AI revolution and capitalism. Winterson says she ‘admires’ capitalism, but it’s worth remembering (as Ralf Dahrendorf liked to emphasise) that when thought of as a mode of profit-seeking production, exchange and consumption, capitalism comes in several different forms: European Commission-style regulated capitalism; heavily militarised American big tech cowboy capitalism; and the Chinese model of party-state-controlled capitalism. Despite their substantial differences, these political economies share a common commitment to the protection of huge corporations, the creative destruction of old technologies, risk taking and profit seeking, and the monetising of people’s daydreams and daily lives by animal-spirited big tech companies. These corporate giants are greedy animals moved by the spirit of greed: Winterson tells us that 1% of Meta’s revenues are spent on wages. Amazon makes $10,000 a second; that’s $600,000 a minute and $36 million per hour. These giants are currently generating potentially dangerous market bubbles: prior to the launch of ChatGPT, OpenAI and Anthropic were in stock market terms valued at only a fraction of their current $500 billion value. Secrecy and manipulation and total control are their thing. So are advertising campaigns, what Winterson nicely calls ‘weapons of mass distraction’, some of them dressed up as scare campaigns like the already-mentioned 2023 ChatGPT media sensation engineered by Sam Altman, his subsequent announcement of an updated o1 version capable of coherent ‘chain-of-thought’ reasoning, or the razzamatazz generated by the narcissistic tech mogul with a Messiah complex Elon Musk when announcing the launch of xAI, a new chatbot he claimed would prove to be a ‘maximum truth-seeking AI that tries to understand the nature of the universe’. 
Winterson understandably speaks of the corporate ‘behavioural nudging’ that’s designed to get users to spend more time on company platforms, part with their personal data and rights to privacy, turn themselves into ‘digital drug addicts’ and buy stuff advertised on their sites. But Winterson then qualifies the point by claiming that, in the end, ‘the evil is in our hearts’. The claim is unconvincing: it is the new state-backed data-harvesting and surveillance capitalism that thinking, public-spirited citizens should greatly fear.

10 Digital Inequality (desigualdad digital)

Despite its thoughtful literary eloquence, Winterson’s 12 Bytes has surprisingly little to say about the problem of digital justice – the unavoidably political problem of who legitimately decides which people benefit from the unfinished AI robotics revolution. Winterson’s neglect of the matter of justice is regrettable, if only because the time may be coming when some governments and corporations manage durably to monopolise the design and application of artificial intelligence. The result? A privileged class of poligarchs would exercise an unfair hand in deciding the future design and practical deployment of AI robots. The ideals of ‘algorithmic accountability’ (Frank Pasquale) and ‘algorithmic democracy’ (Domingo García-Marzá and Patrici Calvo) would be crushed alive.

When considering the matter of justice, it’s a grave mistake to suppose or say that there’s a divine or ‘natural’ logic to the development of artificial intelligence. AI robots are nowadays not in charge of AI development; for the moment, and well into the future, autopoiesis is neither their destiny, nor their privilege, nor their burden. Intelligent robots are simply incapable of producing, reproducing and maintaining themselves. It’s equally mistaken to suppose that a pre-political category called ‘humans’ is currently overseeing the development of AI robotics. Robotics is much more than a technical question. It’s a matter of politics. To state the obvious: the research and development of AI robotics isn’t governed by ‘humans’. Empty generic signifiers of that kind are most unhelpful. Powerful states and profit-hungry corporations like Google and Alibaba are in reality our masters. It may be (as Max Weber would have argued) that the push-pull rivalry between states and corporations turns out to be a source of salvation for citizens. But, for the moment, the big corporate and state money and publicity backing the new AI machine age revolution is twisting its trajectories and substance heavily in their favour.

In matters of justice, some trends, not surprisingly, are decidedly threatening to many people’s dignity. Enshittification (Cory Doctorow) is among the observable pathologies. Consider, too, the impact of AI automation on the labour markets of capitalist economies. Some say that the new machine age has barely begun, that we are living in times comparable to the year 1780, nearly a lifetime after the invention of the steam engine (1712) but two decades before the first commercially successful, steam-powered railway journey (1804). Data-crunching human economists already agree that the application of artificial intelligence to labour markets is intensifying, in China as much as in Europe and North America, even though they can’t reach consensus on the longer-term data trends. Gloomy forecasters predict a dystopian future of capitalism-plus-robots that triggers a new wave of ‘technological unemployment’. Fears are growing that AI robots are ‘eating jobs’, destroying the work ethic and slowly ruining the future life chances of citizens. A decade ago, an influential report by McKinsey Global Institute predicted that by 2055 intelligent robots could halve all work done globally by humans (which humans it didn’t say). More recently, computer scientist Geoffrey Hinton has claimed that ‘AI will make a few people much richer and most people poorer.’

We should pay attention to such forecasts, for amidst the conflict of interpretations one thing is crystal clear: the application of automated intelligent machines to our political economies is widening the gap between the rich and the poor. It’s not just that many jobs are at risk (in the core fields of manufacturing, accommodation and food service and retail trade, 51% of current US employment is at high risk of automation, say McKinsey analysts). Fractious polarisations are happening within the overall work force. There’s a widening gulf between data scientists, artificial intelligence programmers and other high-end, high-skilled, well-paid jobs, which are likely to remain plentiful, and low-skilled and poorly paid personal service sector jobs, which are most at risk. Recall old Aristotle’s dream of a world in which ‘the shuttle would weave and the plectrum touch the lyre without a hand to guide them’, a polity in which ‘chief workmen would not want servants, nor masters slaves’. Now ponder for a moment an Amazon warehouse run and organised by bright orange Kiva robots. Next imagine a future economy in which 0.1 percent of humans, corporate employers and their shareholders, control the machines, the remaining lucky 0.9 percent administer us, and the rest of the population, the unlucky 99 per cent, scramble for the spoils, unprotected by unions. What kind of unequal society would this be? If, as Kant and others have said, the principles of justice are more valuable than, say, economic growth or efficiency, then doesn’t this mean, contrary to old Plato, that in the AI robotics age the political matter of deciding what should keep each person in their place, with their fair share, should be guided by the vision of a society committed to the improvement of all peoples’ lives, wherever they live and whatever their expectations of life, past and present and future?

11 Democracy, Democracy (Democracia)

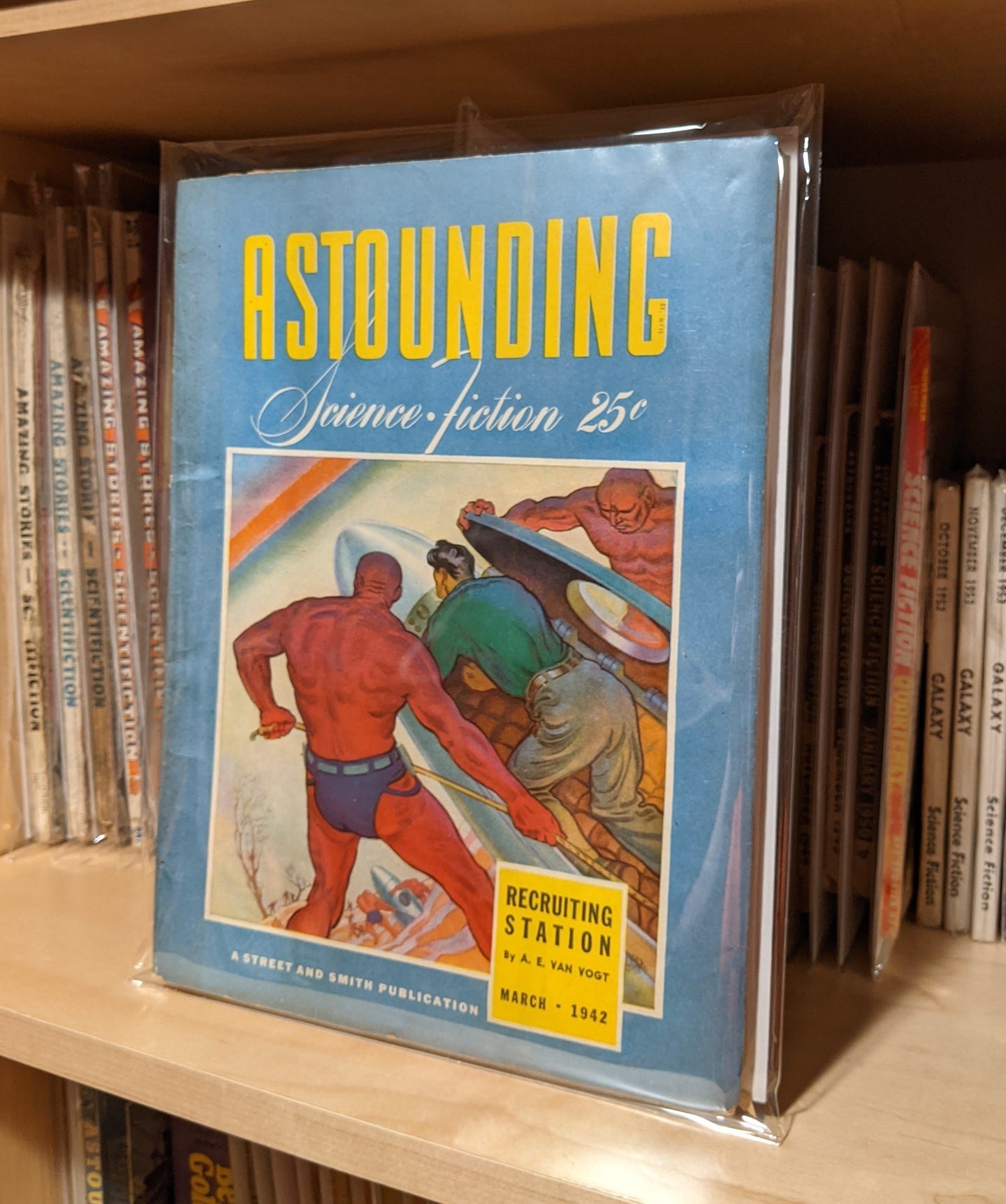

When reading Winterson’s 12 Bytes, readers interested in democracy will be disappointed. The book contains no more than a fleeting recognition that the whole subject of democracy is unavoidably important when it comes to the matter of artificial intelligence and smart robots. Just consider for a moment Russian-born Isaac Asimov’s famous short story ‘Runaround’. Published in 1942 and set in the year 2015, it proposed Three Laws of Robotics: engineering safeguards and built-in ethical principles that Asimov would later use in dozens of his stories and novels. They were: 1) A robot may not injure a human being or, through inaction, allow a human being to come to harm; 2) A robot must obey the orders given it by human beings, except where such orders would conflict with the First Law; and 3) A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

Astounding Science Fiction (March 1942) featuring Isaac Asimov’s Runaround

Asimov’s Three Laws are today frequently cited and praised – Winterson doesn’t mention them – but their anti-political silence about the dangers of unaccountable power is striking. Who exactly are the ‘human beings’ licensed to issue ‘orders’ to us, we can ask? Who authorised their power to do so? What counts as ‘harm’ to ‘human being’ and ‘robot’ alike? Through which ‘human’ institutions and procedures are disputes about the meaning and violation of the harm principle best handled? What about alternative sets of ethical principles for governing our relations with humans? Sadly, sage Asimov didn’t answer these inescapably political questions. Nor does Winterson. ‘Accountability matters’, she writes, but the ethics and vital procedures for ensuring that those who exercise power are held accountable for their actions remain altogether fuzzy.

But why is the matter of democracy vital in discussions about artificially intelligent machines and machine learning? As I have tried to explain when reconsidering Winterson’s book and matters such as power and anthropomorphism, it’s not just that democracy helpfully enables people, networks and organisations to stir up controversies and imaginings of new scenarios and hopes for practical alternatives, or that it reminds us of our own ‘human’ contingency, and the contingency of everything in the world around us, including our relationship with the ecosystems in which we dwell, or that democracy protects people against the possibility of losing themselves in corporate and government smoke and mirrors, all the while complaining that ‘AI’ is gradually destroying our capacity for thinking, and sapping our emotions. The human invention called democracy goes well beyond all that. It has a punk quality. It is not a synonym for elections, or ‘communicative reason’ (pace Adela Cortina, for whose broad defence in Ethics or Ideology of Artificial Intelligence? [2024] of the ‘public use of reason, free and inclusive’ and ‘cordial autonomy’ I otherwise have considerable sympathy). In its commitment to the equalisation of life chances, democracy prompts us to think in precautionary terms, to see that concentrated power in a few hands is both unnecessary and potentially evil, that there are public benefits that flow from the active refusal of arbitrary power, and from efforts to redistribute and equalise its exercise. Considered as a political ideal - the most radical political ideal yet invented - democracy warns that corporate AI coders and their intelligent machines can become foot servants and viziers in the courts of despotic power. More positively, the spirit and substance of democracy can help us break the bad habit of pre-political thinking that’s still so evident in so much present-day public discussion of AI. 
With the help of intellectuals, journalists, elected representatives and citizens who invest their time and energy into bodies such as the Data & Society Research Institute, AlgorithmWatch, Algorithmic Justice League, and the AI NOW Institute, democratic politics can enable us to reassess and take advantage of the unfinished AI revolution, for instance by placing on the public agenda such matters as the ownership and control and taxation of automated machines, corporate tax evasion, worsening social inequality, the protection of children’s rights, and the need for a redistribution of wealth and life chances through the reduction of working time and citizens’ basic income schemes.

The democratisation of artificial intelligence is an inescapably messy business. There are already important fightbacks and breakthroughs, especially in the field of law. In search of major payouts, for instance, publishers have launched a wave of lawsuits against AI companies; authors recently won a class-action lawsuit against Anthropic, which agreed to pay at least $1.5 billion, or approximately $3,000 per book. There are also deepening public controversies about whether, how and to what extent AI robots should be regulated. Think of the controversies aroused by the steady application of AI tools to courtrooms – would these tools remove prejudices from legal proceedings or are they trained on data that will just reproduce biases? Will they reduce workloads and costs? If judges are replaced by algorithmic decision making, will publics lose confidence in the courts? – or the public backlashes triggered by the draft recommendations of the European Parliament’s legal affairs committee (2017) to grant robots the legal status of ‘electronic persons’, persona ficta potentially endowed with rights to defend and protect themselves in courts of law when disputes arise concerning the damages they cause, or the harms they suffer as non-sentient powerful tools. Opponents insist that by granting legal personhood to robots, manufacturers would merely absolve themselves of responsibility for the actions of the machines they produce and sell. The world can expect many more of these kinds of disputes, and that’s no bad thing when it comes to democracy simply because most of the AI robotics revolution and its tools aren’t subject to political regulation.

12 Living on Planet Earth (Planeta tierra)

Winterson contends that since there’s no hi-tech solution to human stupidity and greed, we humans consciously ‘begin to seek a trans-human, and eventually a post-human future’. Compare the contrasting straightforward advice of the wily Irish playwright Samuel Beckett to his fellow humans in his absurdist one-act play Endgame (1957): ‘Use your head, can’t you, use your head, you’re on Earth, there’s no cure for that!’ Building on this point, we could say that there’s an endgame for which our AI/robotics revolution offers no solution. It’s simple: whole societies on our planet are beginning to learn that people live on Earth, that it’s mandatory for them to learn that they must return to Earth, to reconnect with their ecosystems by paying attention to the co-dependence of the so-called human and the non-human. Living on planet Earth means caring and doing something about rising land, sea and air temperatures, extreme weather events, the destruction of species, the fouling of our earthly nests. Some AI tools - weather satellites, monitoring the breeding patterns and movements of endangered species, for instance - may helpfully come to our rescue, even though training cutting-edge tools and the operation of data centres such as Stargate require the consumption of enormous quantities of water and electricity. But Winterson’s 12 Bytes is evidently not much interested in either these tools or the big challenge of how to live well on Earth because it instead calls for an end to homo sapiens. It fantasises a ‘post-human future’. The book joins hands with goth queer thinkers such as Patricia MacCormack (author of Ahuman Manifesto), who are convinced that time’s up for humans, and with tycoons such as Elon Musk, who urge our planet to fly onwards and upwards towards a bright world of ‘singularity’ where humanity leaves behind its own self-destructive filth. 
In fairness, 12 Bytes does make passing references to climate catastrophes and ‘low carbon solutions’ but, overall, it prefers to indulge the fantasy of life in a disembodied future ‘metaverse’ of peace, cooperation and love. Here Winterson’s well-known Pentecostalism makes its presence felt: the vision of a ‘post-human future’ is a sublimated, this-worldly version of baptism with the Holy Spirit, a moment of Rapture when (for Christians, at least) Jesus returns and the Saved are swept into eternal life. This strange vision of a post-human metaverse is in effect a call for us to experience a secular form of religious conversion laced with the ability to receive visions and dreams, to prophesy the future, to heal by casting out demons, and to perform miracles, including raising people from the dead. There’s more than a touch of faith healing in Winterson’s predictions. Robots ‘will act as transitional objects for humans as we move towards pure AGI’, says 12 Bytes. ‘Nothing need be lost in a reality that is not biological’, it continues. ‘Robots will expand our definition of what is alive-ness. And return us to what is a richer understanding of the interplay and interdependence between embodiment and non-embodiment.’ Artificial general intelligence will supposedly jump start a redefinition of ‘what it means to be a human being…Our place. Our purpose. Even our embodied-ness.’ We will eventually be able to let go of our bodies, the book predicts. Except, of course, that for the foreseeable future the bodies of 8.14 billion people currently living on our degraded planet, one-third of whom have still never been online, are going nowhere, and certainly neither to Meta nor Mars.